Unlocking Ultrafast Li-Ion Highways on Carbon Surfaces

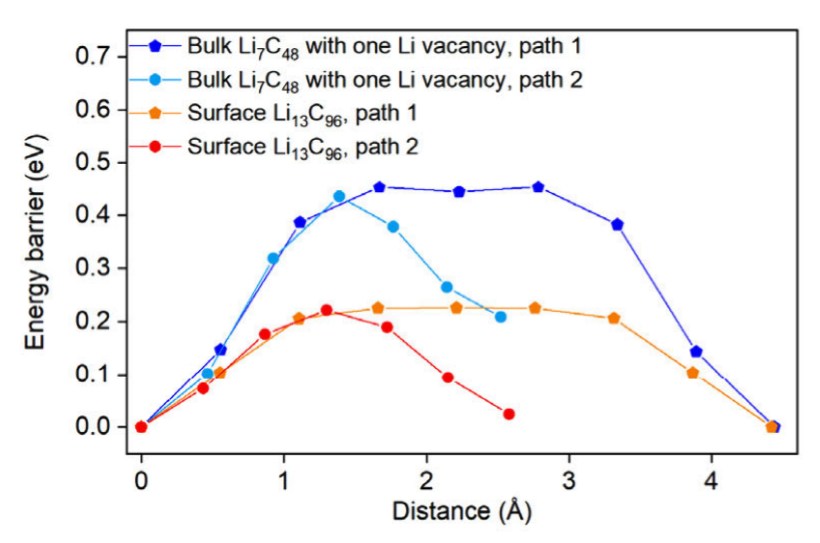

For decades, researchers have relied on carbonaceous materials such as graphite and carbon black as workhorse components in lithium-ion batteries (LIBs). These carbons usually function as hosts for lithium intercalation, where Li ions slip between graphene layers. But there has always been a catch: lithium diffusion through bulk carbon is relatively slow, which limits performance and requires the help of liquid or solid electrolytes. In this work, we collaborated with the group of Prof. Ping Liu and researchers at the University of Maryland and the University of Houston to identify a completely new superionic lithium transport pathway along the surface of carbon materials. We found that in carbon blacks with high surface area but limited lithium storage capacity, such as Ketjen black (KB), lithium ions move astonishingly fast across the surface once the carbon is lithiated. At room temperature, lithiated KB exhibited an ionic conductivity of 18.1 mS/cm, outperforming many leading solid electrolytes. Our group’s contribution (led by Ji Qi) was a set of DFT calculations showing that lithium at the surface encounters much lower migration barriers (as low as 0.15 eV) than lithium inside bulk graphite. In effect, the carbon surface becomes a highway for ultrafast lithium transport. This surface-mediated conduction has several […]